About Us

This website is outdated. Please follow: https://cmilab.org/aichip/

Conscious awareness plays a major role in human cognition and adaptive behavior, but its function in multisensory integration is not yet fully understood. Open questions remain:

- How does the brain integrate the incoming multisensory signals with respect to different external environments?

- How are the roles of these multisensory signals defined to adhere to the anticipated behavioral constraints of the environment?

The CMI lab, with its world-class multidisciplinary team of cognitive scientists, neuroscientists, mathematicians, and engineers, is focused on understanding the cellular mechanisms of mental life, including conscious experience, cognitive control, and learning.

Our recently launched collaborative doctoral partnership (CDP) and research programme, in collaboration with the Massachusetts Institute of Technology (MIT), the University of Oxford, and Nottingham Trent University (NTU), uniquely combines fundamental engineering, computer science, and computational neuroscience research. It addresses open AI challenges that are well beyond the capabilities of today’s state of the art and that are a bottleneck for future brain-inspired AI technologies.

Our focus areas are:

- Conscious multisensory integration

- Biophysical and hardware-efficient neural models

- Explainable artificial intelligence

- Information processing in neurodegenerative diseases

- Real-time optimized resource management

- Natural language processing

- Context-aware/autonomous decision-making

- Low-power deep machine learning

- Neuromorphic chips

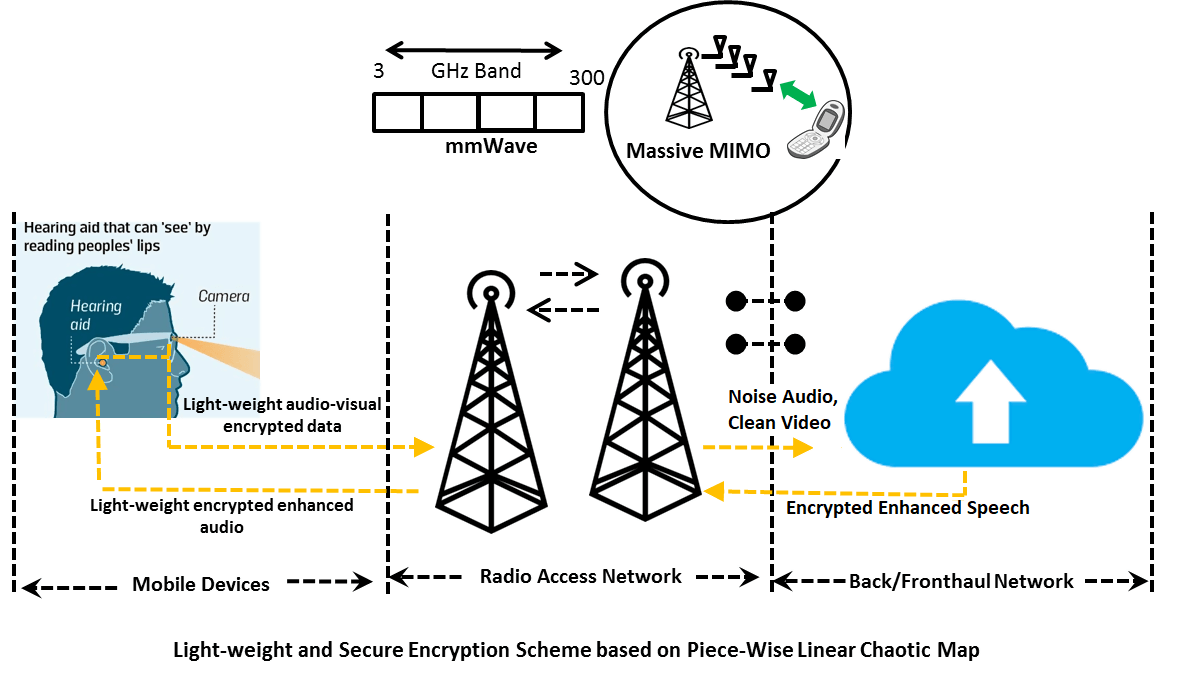

Developing the world’s first cognitively inspired, 5G-IoT-enabled multimodal hearing aid

Our proposed transformative idea of a 5G-IoT-enabled audio-visual hearing aid (HA) has won a £4 million EPSRC Transformative Healthcare Technologies programme grant (starting June 2020). This grant will help us further develop our ambitious, pioneering, privacy-preserving multimodal (MM) HA, the world's first, by 2050. The EPSRC believes that our proposed idea is truly transformative, with an impact on healthcare, community care, home care, and an ageing workforce by the year 2050. Our visionary project represents a step change in how healthcare will be delivered in the future.

IEEE World Congress on Computational Intelligence (WCCI) 2020, Glasgow, Scotland, UK, 19-24 July 2020

IEEE WCCI 2020 is the flagship conference of the IEEE Computational Intelligence Society and the world's largest technical event in the field of computational intelligence. IEEE WCCI 2020 will host three conferences under one roof: the 2020 International Joint Conference on Neural Networks (IJCNN 2020), the 2020 IEEE International Conference on Fuzzy Systems (FUZZ-IEEE 2020), and the 2020 IEEE Congress on Evolutionary Computation (IEEE CEC 2020).

Collaborative Doctoral Partnership with MIT and University of Oxford to develop a more natural human-like computing system with enhanced situational awareness.

The gap between humans and machines is shrinking, and scientists are trying to develop more human-like computing devices. It is becoming increasingly important to develop computer systems that incorporate situational awareness. However, the methods used to reduce margins of uncertainty and minimize miscommunication need further exploration. In this research, we aim to further develop our understanding of cognition and its emergence over development and evolution to realize human-like computing. Our ongoing work involves the development of evolved neural models, inspired by human cognition to serve broader goals, and further informed by biological and psychological models of competence. These novel neural networks will be used to build accurate driver behavioral models (e.g. for driverless cars) that support precise maneuvering decisions in different situations (e.g. blind spots, reversing).

Collaboration with the Computational Neurosciences and Cognitive Robotics Laboratory aims to jointly work on understanding contextual multisensory integration in the brain and on developing a low-power brain-computer interface (BCI) for accurate decision making.

Collaboration with the Nuffield Department of Surgical Sciences aims to jointly work on understanding neurodegenerative processes in Alzheimer’s and Parkinson’s diseases using novel computational models and biomedical methods.

Sensory impairments have an enormous impact on our lives and are closely linked to cognitive functioning. Neurodegenerative processes in Alzheimer’s disease (AD) and Parkinson’s disease (PD) affect the structure and functioning of neurons, resulting in altered neuronal activity. For example, patients with AD suffer from sensory impairment and lack the ability to channel awareness. However, the cellular and neuronal circuit mechanisms underlying this disruption remain elusive. Therefore, it is important to understand how multisensory integration changes in AD/PD, and why patients fail to guide their actions.